Hy End Fed / Scs DSP TNC

Today I bought a Hy End Fed antenna for the bands 10/15/20/40/80. The radiation direction will be NNE North-northeast and SSW South-southwest. Better East and West but unfortunately that is not a option. This Hy End Fed is made by a Dutch company. https://www.hyendcompany.nl

The antenna I bought is this one.

https://www.hyendcompany.nl/antenna/multiband_8040201510m/product/detail/166/HyEndFed_5_Band_AL_Plaat_40_58_mm_MK3

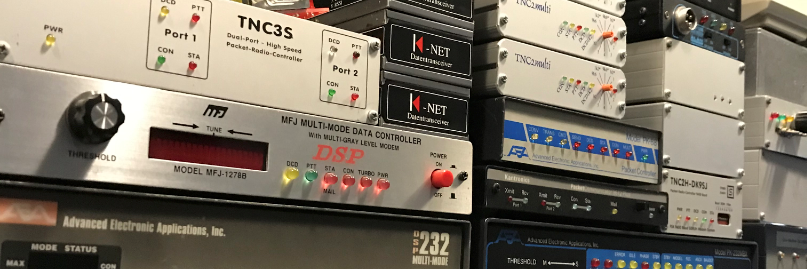

I also bought a nice small modem. The Scs DPS TNC. It very small 🙂 (about 50 euro`s on sort of ebay)

http://www.scs-ptc.com/en/Modems.html

I hope that it will be possible to operate hf packet on the 105net network. Would love to connect a 300Baud link to the 105net network.

Some details about the Hy End Fed MK3

HyEndFed 5 band for 80/40/20/15/10 Meter Max. Power : 200 watt PEP, SSB. Length : 23 meter. Bandwidth 100 KHz at 80 meters, without tuner. Aluminum mounting plate with galvanized clamps. Mast diameter 40 to 58mm. Enclosure : Polycarbonate IP67, 100% UV resistant. SO239 Connector Teflon. All hardware Stainless steel.

Some detials about the ScS DSP TNC

Technical Data •Universal TNC and APRS position tracker with DSP, USB-connection, output to control a switching relay (e.g. to switch the radio's power supply). NMEA input/output for GPS data, bi-coloured LED's, 4 DIP switch for the basic configuration. •Optically isolated USB-connection to the computer, generally well filtered I/O's to avoid hum and susceptibility in HF environments. Metal case. •Use of temperature stabilized oscillator (TCXO) for high reliablilty under all temperature conditions. •10..20 V DC supply power, use of highly efficient internal power regulators. •Mini-Din connector, compatible to the usual transceiver "Packet-Connector". •Currently implemented protocols: Generally AX.25, level 2. Modulations •300 baud AFSK (old HF-Packet standard) with new developed multi-detector: The DSP automatically processes a frequency range of +- 400 Hz looking for 300 bd transmissions and receives all detected signals in PARALLEL. No exact tuning by the user is necessary any more, but always perfect reception! •200/600 baud "HF Robust-Packet", 8-tone PSK, 500 Hz bandwidth, automatic frequency tracking (RX) +- 240 Hz. •1200 baud AFSK (standard Packet-Radio) with special filtering to avoid adjacent channel interference, and degradations by AC hum. •9600/19200 baud direct FSK (G3RUH compatible) with optimized DC removal by the DSP.

Linfbb maintenance scripts modified.

Brain n1uro has write a script to getting reports sent to you nightly from your maintenance.

/usr/local/lib/fbb/script/maintenance/20_epurmess

#!/bin/bash LIBDIR=/usr/local/lib/fbb SYSOP=`/usr/local/sbin/fbbgetconf sysmail` HADD=`/usr/local/sbin/fbbgetconf call` MAIL=/usr/local/var/ax25/fbb/mail/mail.in echo echo "--- Running epurmess" echo $LIBDIR/tool/epurmess ret=$? echo "SP $SYSOP@$HADD" >> $MAIL echo "MSG MAINT at $HADD" >> $MAIL cat /usr/local/var/ax25/fbb/epurmess.res >> $MAIL echo "/EX" >> $MAIL exit $ret

/usr/local/lib/fbb/script/maintenance/20_epurwp

#!/bin/bash LIBDIR=/usr/local/lib/fbb MAIL=/usr/local/var/ax25/fbb/mail/mail.in SYSOP=`/usr/local/sbin/fbbgetconf sysmail` HADD=`/usr/local/sbin/fbbgetconf call` echo echo "--- Running epurwp" echo $LIBDIR/tool/epurwp 40 90 ret=$? echo "SP $SYSOP@$HADD" >> $MAIL echo "WP MAINT at $HADD" >> $MAIL cat /usr/local/var/ax25/fbb/epurwp.res >> $MAIL echo "/EX" >> $MAIL exit $ret

I have some trouble to get things going so i change some line in the scipt.

I have change the line

SYSOP=`/usr/local/sbin/fbbgetconf sysmail` HADD=`/usr/local/sbin/fbbgetconf call`

to

SYSOP=`/usr/local/sbin/fbbgetconf -f /usr/local/etc/ax25/fbb/fbb.conf sysop` HADD=`/usr/local/sbin/fbbgetconf -f /usr/local/etc/ax25/fbb/fbb.conf call`

fbbgetconf needs a option.

Output off the script.

R:180115/0001Z @:PI8LAP.#ZL.NLD.EURO #:20813 [Kortgene] $:20813_PI8LAP

From: PI8LAP@PI8LAP.#ZL.NLD.EURO

To : PD2LT@

1515974462

File cleared : 33 private message(s)

: 5664 bulletin message(s)

: 4665 active message(s)

: 1032 killed message(s)

: 5697 total message(s)

: 8 archived message(s)

: 44 destroyed message(s)

: 0 Timed-out message(s)

: 0 No-Route message(s)

Start computing : 18-01-15 02:01

End computing : 18-01-15 02:01

R:180115/0001Z @:PI8LAP.#ZL.NLD.EURO #:20814 [Kortgene] $:20814_PI8LAP

From: PI8LAP@PI8LAP.#ZL.NLD.EURO

To : PD2LT@

1515974462

WP updated : 249 total record(s)

: 0 updated record(s)

: 1 deleted records(s)

: 0 WP update line(s)

Start computing : 18-01-15 01:01

End computing : 18-01-15 01:01

NetRom Qualities

One of the things that appears to have puzzled Node ops for decades is understanding of NetRom Qualities. A PDF from NEDA(1) drafted in 1994 shows NetRom calculations based off of years of bench testing various settings for diode matrix based TheNet and X1J-4 nodes. While we’ve migrated off Diode Matrix configurations in favor of PC controlled ones we need to make adjustments due to the pounded/hidden backbone link nodes that aren’t in use via axip/axudp linkage.

First lets understand that in the NEDA found quality table anything over a quality of 203 for neighbor links is a statement that the linked node resides physically on your lan. As found by NEDA 228 is a good link for lan based nodes as it will propagate quality to the next hop as 203.

Here BAUNOD links to RSBYPI and it’s link quality is set to 203:

RSBYPI:N1URO-2} Connected to BAUNOD:ZL2BAU-3

r

BAUNOD:ZL2BAU-3} Routes:

Link Intface Callsign Qual Nodes Lock QSO

—- ——- ——— —- —– —- —

> ax0 N1URO-2 203 50 1

BBSURO is a neighbor node to RSBYPI 2 hops away thus it should appear with a derated quality of 181 on BAUNOD:

n bbsuro

BAUNOD:ZL2BAU-3} Routes to: BBSURO:N1URO-4

Which Qual Obs Intface Neighbour

—– —- — ——- ———

> 181 6 ax0 N1URO-2

162 6 ax0 SV1CMG-4

Now let’s visit a node 3 hops away which should appear with a quality of

161:

n mfnos

BAUNOD:ZL2BAU-3} Routes to: MFNOS:N1URO-14

Which Qual Obs Intface Neighbour

—– —- — ——- ———

> 198 6 ax0 SP2L-14

193 6 ax0 SV1CMG-4

161 6 ax0 N1URO-2

Yes it is there but as a tertiary route! This is how and why netrom brakes. It’s not the protocol, it’s the sysops. 198 and 193 are a higher quality and suggests something very wrong. It should appear with a

quality of 181 via SP2L-14 however even if that were true it’d be a secondary path which is false in nature. Let’s look at the other two

paths…

First:

MFNOS:N1URO-14 usa 250 6/B 5 0 0 0 %

2LJNOS:SP2L-14 Area: n1uro

While neighbors, link quality of 250 suggests Poland is handing out N1 calls now since the claim is MFNOS physically is on a lan in Poland. The quality shown at BAUNOD should be shown at 181 since it’s 2 hops via SP2L, and SP2L should show 203. The fact that it’s the primary path is correct being 2 hops vs 3 but it’s quality is being falsely raised due to the link quality of 250 used by SP2L. Think about it this way, if the true host is sending OBS (nodes) broadcasts at a quality of 203, how could it logically be possible to be a higher quality elsewhere?

Let’s look at the secondary route:

r

LAMURO:SV1CMG-4} Routes:

Link Intface Callsign Qual Nodes Lock QSO

—- ——- ——— —- —– —- —

> axudp GB7COW-5 255 168 0

jnos SV1CMG-6 255 781 0

> axip ZL2BAU-3 255 83 1

> radio1 SV1HCC-14 255 154 1

> bpq SV1CMG-7 255 500 0

axudp NA7KR-5 255 3 0

> xnet SV1CMG-3 255 162 0

axip SV1UY-12 255 6 0

> axip OK2PEN-5 255 3 0

axip SV1DZI-11 255 62 0

> tnos SV1CMG-14 255 187 0

> axudp PI1LAP-5 255 34 0

> fbb SV1CMG-3 10 0 0

This I don’t at all understand. It appears Greece now is handing out calls from all over the globe since quality is 255! So now the question is, how does a node (MFNOS) which doesn’t link to LAMURO show a priority path to BAUNOD via LAMURO? This is known as hijacking routes. If SP2L was configured for 203, ZL2BAU would then receive MFNOS at a quality LOWER than the 193 received by SV1CMG-4 -= WHICH DOESN’T HAVE A LINK TO MFNOS!!=- so hopefully now you can see how NetRom paths get hijacked.

Since axip/axudp links don’t use backbone/#alias nodes for internlinking, they’re direct, we then adjust our minqual to reflect such so that we avoid:

– hijacking paths

– spew nodes that are not connectable due to excessive hops

To accomplish this via vanilla NetRom (NOT INP3 / Xnet) the following has been tested to be quite valid for nrbroadcast:

min_obs: 4

def_qual: 203

worst_qual 128

verbose 1

If you have a neighbor with X-net based links then set your verbose on all your interfaces to 0 and worst_qual to 202, or if you have a neighbor on the same link interface running link qualities NOT equal to the same mathematical calculations set your verbose to 0 AND raise your worst_qual to 202 to reject falsely raised qualities from infesting your nodes tables.

*Keep in mind this as well; a user on HF (aka: 300 baud) is most likely going to time out off of your node if you have more than a screen worth of CONNECTABLE nodes… and having a nodes table of truly connectable nodes will bring credibility to your system and those end users you may get to visit will appreciate the integrity of your network.

I’ve had some netrom based queries lately so I hope this answers questions others have had. When the protocol is treated properly by sysops it’s not a bad dynamic routing protocol but when the humans abuse it… then it becomes troublesome.

(1) http://n1uro.ampr.org/neda/neda_annual_v005_1994.pdf

Brian n1uro

SystemD and Uronode

It`s also possible to run uronode with Systemd.. The systemd files can be found is the source directory of uronode. /uronode-2.8.1/systemd/

A sort list what to do.

- copy the SystemD files into /lib/systemd/system - run: systemctl enable uronode - run: chkconfig uronode on - run: systemctl daemon-reload - in /etc/xinetd.d/uronode (or node) set disable to yes (or comment out your line in /etc/inetd.conf) - run: systemctl restart xinetd - reboot

The systemd files unit files

/uronode-2.8.1/systemd/uronode.service

[Unit] Description = URONode Server Requires = uronode.socket After=syslog.target network.target [Service] Type=oneshot ExecStartPre=systemctl start uronode.socket ExecStart=/usr/local/sbin/uronode ExecStartPost=systemctl restart uronode.socket StandardInput=socket Sockets=uronode.socket [Install] Also = uronode.socket WantedBy = multi-user.target WantedBy = network.target

The uronode.socket

/uronode-2.8.1/systemd/uronode.socket

[Unit] Description=URONode Server Activation Socket [Socket] ListenStream=0.0.0.0:3694 Accept=yes [Install] WantedBy=sockets.target

The uronode@.service

/uronode-2.8.1/systemd/uronode@.service

[Unit] Description = URONode Server Requires = uronode.socket After=syslog.target network.target [Service] Type=oneshot ExecStart=/usr/local/sbin/uronode StandardInput=socket Sockets=uronode.socket [Install] Also = uronode.socket WantedBy = multi-user.target WantedBy = network.target

You can also start the ax25 system with Systemd.

Copy the ax25.system file /uronode2.8.1/systemd to the /lib/systemd/system directory

[Unit] Description=ax25 service After=network.target syslog.target [Service] Type=oneshot ExecStart=/usr/local/bin/ax25 start ExecReload=/usr/local/bin/ax25 restart ExecStop =/usr/local/bin/ax25 stop [Install] WantedBy=multi-user.target

systemctl enable ax25.system systemctl daemon-reload systemctl start ax25

Systemd / Systemctl and Linbpq

Update : Okay, i relay dont like systemctl….

apt-get install sysvinit / apt-get install openbsd-inetd / apt-get purge systemd / reboot

Debian Jessie uses the “new” systemd. No more inittab and inetd.conf. So a unit file must come up for this.

nano /etc/systemd/system/linbpq.service

[Unit] Description=Linbpq start After=network.target [Service] Type=simple ExecStart=/usr/local/linbpq/linbpq WorkingDirectory=/usr/local/linbpq Restart=always [Install] WantedBy=multi-user.target Alias=linbpq.service

systemctl enable linbpq.service

systemctl daemon-reload

systemctl start linbpq.service

Now let`s check all startup nicely.

systemctl status linbpq.service

root@gw:/etc/systemd/system# systemctl status linbpq.service

● linbpq.service - Linbpq daemon

Loaded: loaded (/etc/systemd/system/linbpq.service; enabled)

Active: active (running) since Wed 2017-12-13 07:14:07 CET; 1h 19min ago

Main PID: 19267 (linbpq)

CGroup: /system.slice/linbpq.service

└─19267 /usr/local/linbpq/linbpq

Up and running

Update Debian Wheezy to Jessie

Last night I updated the system to Debian Jessie. This did not go without a struggle. But we are online again.

Upgrade Debian Wheezy to Jessie safely

First make a complete backup of your system. I use rsync for this and put the backup on a remote vps.

rsync -uavzh --exclude='/mnt' --exclude='/proc' --exclude='/sys' --delete-after /

user@server-ip-addr:/backup/gw-pd2lt

Make sure that your system is completely updated.

apt-get update

apt-get upgrade

apt-get dist-upgrade

Update the sources.list for Jessie

nano /etc/apt/sources.list

deb http://ftp.de.debian.org/debian/ jessie main contrib non-free deb-src http://ftp.de.debian.org/debian/ jessie main contrib non-free deb http://httpredir.debian.org/debian jessie-updates main contrib non-free deb-src http://httpredir.debian.org/debian jessie-updates main contrib non-free deb http://security.debian.org/ jessie/updates main contrib non-free deb-src http://security.debian.org/ jessie/updates main contrib non-free

After this run a update again

apt-get update

apt-get upgrade

apt-get dist-upgrade

Okay now reboot (thumbs crossed)

root@gw:# cat /etc/os-release PRETTY_NAME="Debian GNU/Linux 8 (jessie)" NAME="Debian GNU/Linux" VERSION_ID="8" VERSION="8 (jessie)" ID=debian HOME_URL="http://www.debian.org/" SUPPORT_URL="http://www.debian.org/support" BUG_REPORT_URL="https://bugs.debian.org/" root@gw:#

You will probably encounter some problems. I had some problems with apache2, but after some searching, he is also up and running again.

Follow up : Setup new System on PI1LAP

This is a follow up on this post

http://packet-radio.net/2017/12/setup-new-system-on-pi1lap/

Today the SSD’s are installed in the PC. I ran into quite a few problems. Hardware-based raid is not possible with the PCI card and SSDs. Now the mainboard can also raid. Unfortunately, the SSDs are not recognized here. Cheap is expensive. So now over to software raid. Software raid runs perfectly. But here I have to hand in speed.

Here below the benchmark of the old sata hard disks. The old sata 80Gb disks were in Hardware-based raid. Cheap pci card from Delock. Both systems use raid 1.

I use the same command for both tests.

./fio --randrepeat=1 --ioengine=libaio --direct=1 --gtod_reduce=1 --name=test --filename=test / --bs=4k --iodepth=64 --size=4G --readwrite=randrw --rwmixread=75

Starting 1 process Jobs: 1 (f=1): [m] [100.0% done] [1504KB/388KB/0KB /s] [376/97/0 iops] [eta 00m:00s] test: (groupid=0, jobs=1): err= 0: pid=8223: Thu Dec 7 08:54:51 2017 read : io=3071.7MB, bw=498349B/s, iops=121, runt=6463092msec write: io=1024.4MB, bw=166188B/s, iops=40, runt=6463092msec cpu : usr=0.11%, sys=0.58%, ctx=1035400, majf=0, minf=6 IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0% submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0% complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0% issued : total=r=786347/w=262229/d=0, short=r=0/w=0/d=0 latency : target=0, window=0, percentile=100.00%, depth=64 Run status group 0 (all jobs): READ: io=3071.7MB, aggrb=486KB/s, minb=486KB/s, maxb=486KB/s, mint=6463092msec, maxt=6463092msec WRITE: io=1024.4MB, aggrb=162KB/s, minb=162KB/s, maxb=162KB/s, mint=6463092msec, maxt=6463092msec Disk stats (read/write): sda: ios=782814/264536, merge=3607/1804, ticks=309674428/102480492, in_queue=412156584, util=100.00%

Here you see a read of 121 iops (Input/Output Operations per Second) and a write of 40 iops.

Now here is a benchmark with the ssd’s.

Starting 1 process

test: Laying out IO file(s) (1 file(s) / 4096MB)

Jobs: 1 (f=1): [m] [100.0% done] [19988KB/6481KB/0KB /s] [4997/1620/0 iops] [eta 00m:00s]

test: (groupid=0, jobs=1): err= 0: pid=29862: Sat Dec 9 04:51:12 2017

read : io=3071.7MB, bw=15291KB/s, iops=3822, runt=205708msec

write: io=1024.4MB, bw=5099.6KB/s, iops=1274, runt=205708msec

cpu : usr=2.56%, sys=14.12%, ctx=214110, majf=0, minf=5

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=0.1%, >=64=100.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.1%, >=64=0.0%

issued : total=r=786347/w=262229/d=0, short=r=0/w=0/d=0

latency : target=0, window=0, percentile=100.00%, depth=64

Run status group 0 (all jobs):

READ: io=3071.7MB, aggrb=15290KB/s, minb=15290KB/s, maxb=15290KB/s, mint=205708msec, maxt=205708msec

WRITE: io=1024.4MB, aggrb=5099KB/s, minb=5099KB/s, maxb=5099KB/s, mint=205708msec, maxt=205708msec

Disk stats (read/write):

md0: ios=786158/262297, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=392956/262257, aggrmerge=217/165, aggrticks=4046074/3411330, aggrin_queue=7456728, aggrutil=91.99%

sda: ios=393413/262260, merge=238/162, ticks=4029372/3405552, in_queue=7434076, util=91.15%

sdb: ios=392499/262254, merge=197/168, ticks=4062776/3417108, in_queue=7479380, util=91.99%

Here you can see that the iops are much more. So we make progress. It`s not much iops of 3800 for a ssd. But probably is the software raid the cause. And the old system perhaps.

md0 is the array and the sda and sdb are the ssd disk in the array.

I have read several tests about SSD hard disks. If I write 10Gb to the SSD per day, I can probably do 209 years with it. Let’s take 5% of that, is still more then 10 years. But yes you never know with a hard disk.

mdadm is running in the background to monitor the array. Is there something wrong I get a email.

This is a nice website about software raid.

https://raid.wiki.kernel.org/index.php/RAID_setup

Forward from linbpq through uronode to fbb.

There were some problems getting the forward from linbpq through a uronode to a linfbb bbs. I spent a while testing to see if we could get things going. It actually works pretty well.

I have add the following connection script to linbpq

ATT 3 C 44.137.31.73 3694 NEEDLF PI8LAP pi8lap BBS

ATT 3 stands for attach port 3, and port 3 is in my system the telnet port.

Furthermore, in uronode.conf I have created an Alias with the name BBS. So if the command BBS is given in uronode, you will be connected with linfbb.

Alias BBS "c pi8lap"

We can test whether the forward script does what it is supposed to do. Let’s start the forward in Linbpq.

Log file of Linbpq

171209 05:36:28 >PI8LAP ATT 3

171209 05:36:28 <PI8LAP LAPBPQ:PI1LAP-9} Ok

171209 05:36:28 >PI8LAP C 44.137.31.73 3694 NEEDLF PI8LAP pi8lap BBS

171209 05:36:28 <PI8LAP *** Connected to Server

171209 05:36:28 <PI8LAP ��"(uro.pd2lt.ampr.org:uronode) login: *** Password required!

171209 05:36:28 <PI8LAP If you don't have a password please mail

171209 05:36:28 <PI8LAP pd2lt (@) packet-radio.net for a password you wish to use.

171209 05:36:28 <PI8LAP Password: ��^A��^A

171209 05:36:28 <PI8LAP [URONode v2.8.1]

171209 05:36:28 <PI8LAP Welcome pi8lap to the uro.pd2lt.ampr.org packet shell.

171209 05:36:28 <PI8LAP Network node PI1LAP is located in Kortgene, Zeeland, JO11VN Regio 33

171209 05:36:28 <PI8LAP

171209 05:36:28 <PI8LAP https://packet-radio.net / pd2lt@packet-radio.net

171209 05:36:28 <PI8LAP

171209 05:36:28 <PI8LAP {BBS} Linfbb V7.0.8-beta4 (pi8lap)

171209 05:36:28 <PI8LAP {DX} DXSpider V1.55 build 0.196 (pi1lap-4)

171209 05:36:28 <PI8LAP {FPac} Fpac node 4.0.0 (pi1lap-7)

171209 05:36:28 <PI8LAP {JNos} Jnos2.0k1 (pd2lt)

171209 05:36:28 <PI8LAP {Xnet} Xnet v1.39 (pi1lap)

171209 05:36:28 <PI8LAP {RMS} Winlink Gateway 2.4.0-182 (pi1lap-10)

171209 05:36:28 <PI8LAP {BPQ} Linbpq 6.0.13.1 (pi1lap-9)

171209 05:36:28 <PI8LAP {CHat} Linbpq chat (pi1lap-6)

171209 05:36:28 <PI8LAP {COnv} WWconvers saup-1.62a

171209 05:36:28 <PI8LAP

171209 05:36:28 <PI8LAP pi8lap@uro.pd2lt.ampr.org-IPv6: Trying pi8lap ... <Enter> aborts.

171209 05:36:28 <PI8LAP *** connected to pi8lap

171209 05:36:29 <PI8LAP [FBB-7.0.8-AB1FHMRX$]

171209 05:36:29 <PI8LAP Hallo Niels, welkom.

171209 05:36:29 <PI8LAP 1:PI8LAP-BBS>

171209 05:36:29 >PI8LAP [BPQ-6.0.13.1-B1FIHJM$]

171209 05:36:29 >PI8LAP FF

Okay looks good.